The word ‘intelligent’ is increasingly being applied to all manner of systems and devices, and whilst a growing number of technological developments do add value, there is some debate as to how ‘intelligent’ these options really are. The introduction of the various sub-sets of artificial intelligence technology seems to promise value for end users, but what are AI and machine learning, and can they help create smarter systems?

It is virtually impossible to go anywhere in the smart technology market, including the security and safety sectors, without coming across some reference to artificial intelligence (AI). Indeed, there are some systems which proclaim they are AI-enabled without going into any detail about how the benefits can be realised. It’s as if the goal is to flag the system as AI-enabled rather than solve problems.

The first point to consider when assessing the likely benefits from AI is that finding an agreed definition of what constitutes artificial intelligence is a bit like trying to knit fog! Although AI is a generic ‘catch-all’ description which intimates a level of performance rather than specifying definable and quantifiable capabilities, it has been embraced by many as the leading bullet point to describe their latest device or system.

When AI first surfaced in the 1950s, it was considered the stuff of science fiction. However, as people came to understand how technology was achieving its goals, they dismissed the thought that machines could show intelligence!

Deep Blue is a great example. The chess-playing computer was developed by IBM, and went on to become the first computer to defeat a reigning world chess champion in a game played under standard time controls. The win was hailed as the pinnacle of artificial intelligence, and IBM was even accused of cheating to achieve it. However, when people realised the computer was simply searching massive data sets to find relevant moves, the illusion of ‘intelligence’ was removed.

Over the years, our expectations of AI have changed. As people come to understand how the processing is achieving its goals, it ceases to be considered as AI. While this does drive innovation, it also makes it hard to pin down what AI is and what it is not.

It could be argued that any device or system using two or more cross-referenced sources of data to define whether or not an event has occurred is ‘intelligent’ when compared with one that uses a single source of data. However, most people associate the AI description with systems that make decisions autonomously, based upon a wide variety of criteria either gleaned from multiple data sources or compiled by the system itself.

Such AI systems may well be computationally powerful, but the decisions being made are typically not based upon real-time assessments of the events unfolding, nor are they based upon experience or training. Typically, the decisions are either based upon alphanumeric values such as pixel changes, or values derived from running mathematical algorithms. In some cases, they are solely decided by searching huge data-sets for a prescribed solution.

Rarely do the systems assess how the changes correlate with known and established patterns, such as predicted site status, an assessment of object activity in the live scene, or expected behaviours.

While the majority of these systems are certainly complex and highly advanced, they are looking for known or predicted patterns and taking pre-defined actions as a result.

These systems may not be intelligent in the true sense of the word, but this isn’t to say that they’re dumb. The solutions offer a wide range of flexibility and can introduce high levels of added value for security, safety, site management and business intelligence.

However, what many of the established systems and their deployed algorithms lack is the ability to classify objects or define scenarios and apply relevant discriminations based upon data or information learned – either from teaching or experience – when deciding how to manage incidents.

If an event occurs, these mainstream systems will report it if they have been programmed to do so. If the events occur every day, the systems will report them every day, even if an operator repeatedly clears down the notification without taking action. The systems will not learn to ignore the events, unless they are specifically reprogrammed to do so by an operator or integrator.

Also, if an unexpected event occurs which the systems are not programmed to detect, they will not see this as an anomaly and as a result won’t present it to the operators, nor will they learn how to deal with similar events in the future based upon the operators’ responses.

Even today’s more advanced technologies often rely upon the use of programmed and clearly defined behaviours – either inputted by the integrator or user, or defined by the manufacturer – to check for violations and exceptions.

Understanding exceptions

The ability to be taught what is normal and what is exceptional is the basic foundation for intelligence in terms of how a system operates. If a system can recognise objects, detect behavioural patterns and then apply a degree of reasoning based upon the context of the gathered information, it is closer to being ‘intelligent’.

In terms of Machine Learning – which is a sub-set of AI – huge data-sets are fed into a computer. These are used to provide a wide range of criteria against which a machine can assess a situation. Depending upon the inputs it receives, it will select a relevant output. This is based on prediction algorithms. In many applications, Machine Learning will be sufficient for most tasks.

However, it must be remembered that with Machine Learning, if the system does not have the appropriate data, it is unlikely to be able to deliver the expected results. Machine Learning is a process, and expecting systems to ship with all relevant data isn’t always a good thing.

Machine Learning is a more flexible sub-set of artificial intelligence, but is heavily dependent upon input data. Thanks to numerous Hollywood productions, CSI-type television shows and a general misunderstanding of the way in which technology works, many end users believe the ability for Machine-Learning systems to gather data independently, assess and understand it, and then apply reasoning based on the data is already with us. This can actually be a negative, because it means their expectations for advanced technologies are much higher than they should be.

This can also be a stumbling block for integrators who are tasked with designing and delivering intelligent systems. Whilst many in the smart systems industry understand the technologies require varying degrees of training and the supply of relevant data sets, the end user can sometimes drastically underestimate the need for such set-up and teaching.

However, if the right approach is taken, and expectations are tempered, there is a sub-set of Machine Learning which moves things even closer to the generally understood concept of intelligent systems: Deep Learning.

The neural connection

For a good few years, neural networks, and more recently deep learning, have been buzz-phrases associated with the more significant advancements in the field of Machine Learning. Many academics will point out that deep learning is merely neural networking revisited. In the past, some manufacturers have claimed to offer systems based upon neural networking, but because of limitations on processing capabilities at the time, these options rarely made an impact or delivered the levels of performance promised.

As with many AI-based technologies, neural networking was heralded on the basis of what it could ‘theoretically’ achieve, long before it had the capabilities to get close to the promised results. If anything, the neural networking bubble burst – from the point of view of many end users – before it even got going.

It’s interesting that many innovators working with neural networks switched their terminology to Deep Learning when the processing power became available to actually deliver on the claims. It allowed them to disassociate their solutions from the issues of the past. Unfortunately, the clamour to claim some level of Deep Learning has seen some drift back to referring to their solutions as neural networking.

In truth, it matters not which terminology is used. For integrators and their end user customers, the important point is that thanks to advances in IT resources, modern solutions can make use of the technology, and deliver real-world benefits without the need for excessive budgets.

Deep Learning, or neural networking, is based upon the use of multiple cascaded layers of algorithms. Each layer makes use of input and output signals. Effectively (and in simple terms) a layer will receive an input, which it will then modify and pass on as an output. Each subsequent layer treats the output of the previous layer as its input. Deep Learning is so-called because it makes use of multiple processing layers. Each layer can ‘transform’ the input it receives based upon the parameters for which it has been trained.

The key here is training. Rather than simply following a defined set of rules, the advanced processing replicates the way neurons work in the brain. Certain inputs can be given greater priority than others, and the system can decide whether inputs are of value or not.
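The cascade of layers described above can be illustrated with a minimal sketch. It assumes a tiny two-layer network with hand-picked weights; the names `layer`, `forward` and `NETWORK` are hypothetical, and in a real Deep Learning system the weights would be learned from training data rather than typed in. A weight of zero shows how an input can be treated as having no value, while a larger weight gives an input greater priority.

```python
import math


def layer(inputs, weights, biases):
    """One layer: each neuron weights its inputs, sums them and squashes the result."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(1.0 / (1.0 + math.exp(-total)))  # sigmoid keeps output in (0, 1)
    return outputs


def forward(inputs, layers):
    """Pass a signal through the cascade: each layer's output is the next layer's input."""
    signal = inputs
    for weights, biases in layers:
        signal = layer(signal, weights, biases)
    return signal


# Hypothetical weights for illustration only; training would normally set these.
NETWORK = [
    # Layer 1: three inputs feed two neurons. The 0.0 weights ignore an input entirely.
    ([[2.0, -1.0, 0.0], [0.0, 0.5, 1.5]], [0.0, -0.5]),
    # Layer 2: the two outputs of layer 1 feed a single neuron.
    ([[1.0, 1.0]], [-1.0]),
]

score = forward([0.9, 0.1, 0.4], NETWORK)
print(score)  # a single value between 0 and 1
```

Training, in this picture, is the process of adjusting the numbers in `NETWORK` until the final output matches the labelled examples; that step is omitted here.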

By breaking tasks down and filtering specific information, Deep Learning enables machines to perform complex tasks in a manner similar to the human brain.

While standard systems tend to look for defined values for input data, Deep Learning systems can use pixel values, edges, vector shapes and a host of other elements – visual, audible or data-derived – to classify objects or comprehend behaviours and react accordingly. This is achieved by effectively training the algorithms with significant data sets. While Machine Learning requires data sets to reference, Deep Learning is trained by processing the data.

By way of an example, if a standard system deploying IVA is searching for vehicles, the parameters used will typically be size, shape and possibly speed and location. If a roadway has been defined, it expects to see vehicles there. Of course, the integrator needs to enter size and shape parameters, along with speed and direction information. They also need to define detection zones. In short, the system must be told what to look for, and if objects detected match the programmed description, they are likely to be a vehicle.

If, during a storm, a large cardboard container (or other object of a similar size to a small car) was to be blown along the road, the system would create an alert. This is because the object is the size and shape of a vehicle, and is on the roadway, moving in a defined direction.
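The cardboard container scenario can be shown with a short sketch of a rule-based check. Everything here is an assumption made for illustration: the `TrackedObject` and `VehicleRule` types, the field names and the threshold values are hypothetical and do not represent any particular IVA product.

```python
from dataclasses import dataclass


@dataclass
class TrackedObject:
    width_m: float
    length_m: float
    speed_kmh: float
    in_road_zone: bool          # inside the detection zone drawn by the integrator
    heading_matches_road: bool  # moving in the configured direction


@dataclass
class VehicleRule:
    # Hypothetical thresholds an integrator might enter during set-up.
    min_width_m: float = 1.4
    max_width_m: float = 2.6
    min_length_m: float = 3.0
    max_length_m: float = 12.0
    min_speed_kmh: float = 5.0


def matches_vehicle_rule(obj: TrackedObject, rule: VehicleRule) -> bool:
    """Return True when the object fits the programmed description of a vehicle."""
    return (
        rule.min_width_m <= obj.width_m <= rule.max_width_m
        and rule.min_length_m <= obj.length_m <= rule.max_length_m
        and obj.speed_kmh >= rule.min_speed_kmh
        and obj.in_road_zone
        and obj.heading_matches_road
    )


rule = VehicleRule()
car = TrackedObject(1.8, 4.2, 40.0, True, True)
wind_blown_box = TrackedObject(1.5, 3.1, 12.0, True, True)
pedestrian = TrackedObject(0.5, 0.5, 5.0, True, True)

for name, obj in [("car", car), ("box", wind_blown_box), ("pedestrian", pedestrian)]:
    print(name, matches_vehicle_rule(obj, rule))
```

The rule correctly rejects the pedestrian, but it has no way to tell the box from the car, because nothing in the programmed description covers what a vehicle actually looks like.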

When a Deep Learning system is trained, it will be shown datasets of pictures and videos of vehicles. These will include a huge variety of vehicles from a range of angles, all performing in different ways. The only consistency will be the presence of vehicles. By using the depth of processing, the system will learn what characteristics are common to vehicles. It will understand vehicles have lights, wheels, doors, windows, etc. It will recognise that light reflects differently off the windows of travelling vehicles.

Whilst it will still look for size, shape, speed and directional information, it will further analyse detected objects to ensure they have the characteristics of a vehicle as well.

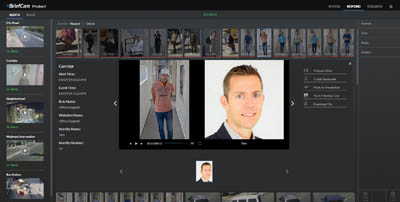

The real power of Deep Learning becomes evident when a suspicious vehicle is detected. The system can then learn specific features of the car (colour, exact size and shape, model-specific features, even dirt or damage) and search across an entire estate of cameras, even those on separate systems.

Within seconds it can deliver results, and the user can filter those by ‘accepting’ images of the right vehicle. This data is used to better filter results. Alternatively, the user can search for red vehicles, for example, in a given time window and location. Once the suspicious vehicle is found, it can be used to drive filtered searches.

With a standard system, the best you can hope for is hundreds of vehicles, maybe filtered by all the possible shades of red.

The technology community has worked to train Deep Learning-based systems to recognise objects with accuracy. Not only will a correctly trained system differentiate between a quadruped animal and a human on their hands and knees, it will also identify if the animal is a dog, horse or other creature if it has been trained to recognise these.

The training can also include behavioural traits, identification of individuals, unexpected or unusual activity, etc. This is important because it allows smart systems to be deployed with much less incident-specific configuration by the integrator. Instead, systems present end-users with a range of events and incidents. The user can then decide which of these are important and which are innocuous. By filtering the results, the system will only identify events of interest.

The bulk of training a deep learning system occurs before it is made available to the industry. Manufacturers will ensure the core learning has been implemented. However, the technology continues to be trained while in use. If it detects an object, activity or an event that it has not seen before (and which is therefore an exception), this can be flagged and presented to the end-user. They can then decide whether or not the system should continue to notify for such occurrences or ignore any similar incidents in the future.
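The feedback loop described above, where an unseen event is flagged and the end-user decides how similar incidents are handled in future, can be sketched as follows. This is a deliberately simplified illustration: the `ExceptionFilter` class, its method names and the event labels are hypothetical, and events are reduced to plain text labels, whereas a real system would judge similarity from learned features.

```python
class ExceptionFilter:
    """Routes events to 'notify', 'ignore' or 'review' and remembers operator rulings."""

    def __init__(self, trained_event_types):
        # Event types covered by the manufacturer's core training (assumed labels).
        self.trained = set(trained_event_types)
        # Rulings made on site: event type -> True (keep notifying) or False (ignore).
        self.operator_rulings = {}

    def classify(self, event_type):
        if event_type in self.operator_rulings:
            return "notify" if self.operator_rulings[event_type] else "ignore"
        if event_type in self.trained:
            return "notify"
        # Never seen before, so it is an exception: present it to the end-user.
        return "review"

    def record_ruling(self, event_type, keep_notifying):
        """Store the end-user's decision so similar events are handled the same way."""
        self.operator_rulings[event_type] = keep_notifying


site_filter = ExceptionFilter({"vehicle_on_road", "person_at_fence"})

first_sighting = site_filter.classify("fox_crossing_yard")   # "review"
site_filter.record_ruling("fox_crossing_yard", keep_notifying=False)
later_sighting = site_filter.classify("fox_crossing_yard")   # "ignore"

print(first_sighting, later_sighting)
```

The same mechanism lets an operator silence a trained event that occurs every day and is always cleared down, without the integrator having to reprogramme the system.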

It is important to understand that Deep Learning is an intelligence-based technology, and has a huge number of applications. Experts estimate that in the future up to 80 per cent of service-oriented jobs will be disrupted by the introduction of systems using Deep Learning technologies.

For smart applications, it is vital the technology is properly implemented. This makes it very important to work with manufacturers who have an established track record in delivering advanced technologies.

In summary

AI makes a number of systems more effective and efficient, and better able to deliver the expected level of performance. It also enables integrators to work more closely with their customers, and for those customers to realise greater benefits from their investment. AI is not a silver bullet, but it does elevate standard systems to smart solutions.