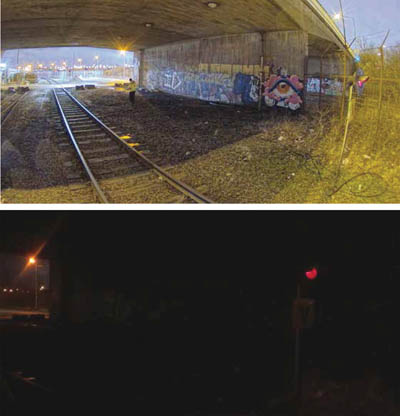

One of the major challenges in around-the-clock video surveillance is low light image capture. The vast majority of security-based surveillance applications are in action 24 hours every day, and given that many crimes take place in the hours of darkness, protection during this period is vital. Additionally, as many sites operate all hours, the added value of video enablement needs to be effective in all conditions. There are a number of ways to improve on video performance in minimal light situations, but which gives the best results?

Modern video-based systems are sought after by end users for two reasons. The first is that video plays a significant role in the security of a site and protection of people and assets. Whether used to record footage for analysis after an event, or increasingly as a proactive tool to identify threats and instigate actions, video surveillance is well proven as effective and reliable.

Increasingly, end users are also using video data as an enabler when it comes to business intelligence, building management and delivering site status information in real time. Video has become understood and appreciated by a growing number of businesses and organisations, and its increased use by systems and solutions outside of the security market is continuing to raise interest in what the technology can offer in the way of reporting.

By its very nature, security-based video surveillance needs to work 24 hours a day, seven days a week. It needs to be able to perform efficiently and consistently when risks of an attack or event are at their highest. In short, when the risk increases, reliance on video surveillance becomes more important for those who have invested in surveillance technology.

In many risk-based scenarios involving burglary, theft, attacks against people or property or other criminal activity, risks increase when light levels fall. It’s a simple formula: criminals want to evade detection and identification, and there is less chance of them being spotted by passers-by or persons at a site if it is dark. Additionally, most sites are less populated, or even unpopulated, during the hours of darkness.

The practice of flooding an area with white light has fallen from favour for a number of good reasons. First, concerns about light pollution have meant many sites need to take their responsibilities seriously.

With a growing number of councils implementing ‘dark sky’ policies, enforcement action is being brought against businesses who flout the guidelines. Second, lighting up large areas of a site throughout periods of darkness is not a cost-effective solution. It is not just the cost of electricity which companies consider, but also the impact such a step makes on their overall carbon footprint.

Finally, passers-by viewing a well illuminated site will often assume that any activity is innocuous, as a well lit site is indicative of one that is operational.

As a result, it is not unusual for security-based video surveillance to contend with delivering video streams for security purposes – detection and identification – in circumstances which are often far from ideal. Typically, light levels will be minimal, which forces integrators and installers to consider a wide range of design criteria to ensure specified cameras can capture images to an acceptable level of quality.

Of course, it’s all too easy to ignore the important criteria when today’s cameras boast outstanding performance in low light applications. If a camera can operate in 0.001 lux, how much additional light is required? In many cases, the specified low light performance doesn’t tell the full story. In some cases, it doesn’t even scratch the surface.

Sensitivity specifications

For those trying to assess how well a camera will perform in low light applications, there isn’t much point in looking at quoted sensitivity specifications. The criteria for judging sensitivity were never standardised, although there was an industry ‘understanding’ of how these figures should be produced. This was based on the minimum light level required to create a 1 volt peak-to-peak video signal, with certain conditions dictating where the measurement was taken.

As competition increased, some manufacturers started to measure in different ways. Some measured the light level at the viewed scene rather than at the camera faceplate; others opted to quote figures for lower strength signals. A few manufacturers would mention the variances, but many did not.

The measurement definitions given by some soon disappeared, and before long there were dozens of different ways of measuring sensitivity, none of which allowed a clear comparison to be made.

For many years, sensitivity figures have been something of a nonsense. As mentioned, there is not, and never has been, a standard for how sensitivity is measured. What drives the specifications is often tender documents.

Specifiers or consultants will often identify a camera which they believe will fill the brief. In the interests of impartiality, they will not identify the device, but instead will use its technical specification for the tender document. On some occasions they might just lift the specs from a previous document for a site with similar needs.

If the selected device’s specification comes from a manufacturer which quotes sensitivity in a different way to others, then similar or better performing cameras will not seemingly meet the specification. In a way, this pushes other manufacturers to adopt methods of measurement which provide the lowest figures.

The loser of this approach is the integrator, because what should be a very useful specification has become a figure which often doesn’t help to indicate the level of performance achievable in low light conditions.

Vital light

Light is an essential element for the generation of any video image. If there is no light, there is no video image. You simply cannot get around that fact. If no light falls onto the sensor of the camera, no electrical signal can be produced. Cameras need light, at a certain level, if they are to produce anything in terms of a usable image.

The debate around sensitivity always falls to that one single consideration: usable images. What is usable for one application may well be unusable for another. There are basic and cost-effective ways of improving image quality in low light, but often these are not suitable for security applications, or even for supplementary uses such as site management, flow tracking, etc.

In the past few decades, the video surveillance sector has seen a lot of technological advances; it could be argued it has developed more than any other security discipline. Manufacturers have R&D teams working around the clock to deliver an ever higher level of performance to those seeking to deploy video systems. Resolutions have been raised from 330 TV lines to today’s HD and 4K UHD streams, with higher multi-megapixel devices also available.

Resolution hasn’t been the only advance. Functionality has also increased. Previously surveillance cameras offered a few basic image manipulation functions. Additional features such as WDR, privacy masking, multi-streaming, VMD and IVA all started out as select options on top-of-the-range devices, before becoming standard features.

It is fair to say that over the years, video surveillance has developed significantly in terms of performance. If you look at the specifications for products over this period of change, you’ll also see a significant shift in figures quoted for sensitivity.

While it would not be wrong to state that many of the reductions had more to do with changes in how sensitivity was measured than with technological advances, things have changed in more recent times. The advances in processing have not only allowed cameras to stream higher resolutions at full frame rates whilst also offering a wide range of features and functions, but also help boost performance in low light applications.

The most basic approach to low light image capture was often to implement slow shutter speeds or use frame integration technologies. Such approaches must be treated with caution, as it is easy to demonstrate the performance by showing a static scene, where the image will be detailed and sharp. However, as soon as motion is introduced, blur and smear become obvious.

When fast motion occurs with slow shutter speeds, at times it can be difficult to ascertain what passed through the scene, and it would be impossible to achieve identification of an individual for evidential purposes. While many cameras feature some level of adjustable shutter speed or frame integration, they are not suitable for many low light applications.
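The scale of the problem can be estimated with some simple arithmetic. The sketch below is illustrative only: the walking speed and the pixel density at the target are assumed values rather than figures from any test, and the function name is hypothetical. It assumes the subject moves across the field of view, at right angles to the camera.

```python
def motion_blur_pixels(speed_m_per_s: float, exposure_s: float,
                       pixels_per_metre: float) -> float:
    """Distance a subject smears across the image during one exposure, in pixels."""
    return speed_m_per_s * exposure_s * pixels_per_metre


# Assumed scene: a person walking at 1.5 m/s, imaged at 250 pixels per metre.
WALKING_SPEED = 1.5
PIXEL_DENSITY = 250.0

for label, exposure in [("1/50 s", 1 / 50), ("1/25 s", 1 / 25), ("1/2 s", 1 / 2)]:
    blur = motion_blur_pixels(WALKING_SPEED, exposure, PIXEL_DENSITY)
    print(f"shutter {label}: subject smears across about {blur:.1f} pixels")
```

Under these assumptions, lengthening the exposure from 1/25 of a second to half a second increases the smear from around 15 pixels to well over 100, which is why a static demonstration scene says little about real-world performance.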

Increasing aperture sizes does allow a greater level of light to fall on the camera’s sensor, but at the cost of depth of field. This can be critical if a camera is covering a large open area. Again, while this can be used to some effect in a few applications, it’s not considered the best approach.
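As a rough guide to how much extra light a wider aperture provides, the light reaching the sensor scales with the inverse square of the lens f-number. The sketch below applies that standard relationship; the f-numbers are example values and the function names are hypothetical. It does not model the loss of depth of field.

```python
import math


def relative_light(f_number_wide: float, f_number_narrow: float) -> float:
    """How many times more light the wider aperture passes (same scene, same shutter)."""
    return (f_number_narrow / f_number_wide) ** 2


def stops_gained(f_number_wide: float, f_number_narrow: float) -> float:
    """The same gain expressed in photographic stops (each stop doubles the light)."""
    return math.log2(relative_light(f_number_wide, f_number_narrow))


# Example comparison: opening up from f/2.0 to f/1.4, and from f/2.0 to f/1.0.
for wide in (1.4, 1.0):
    gain = relative_light(wide, 2.0)
    print(f"f/{wide} vs f/2.0: {gain:.2f}x the light ({stops_gained(wide, 2.0):.2f} stops)")
```

Opening up by one stop roughly doubles the light on the sensor, a useful but limited gain when set against the reduced depth of field.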

The market leaders in camera technology are providing steps forward in low light performance. This comes as a result of several enhancements which, when combined, can deliver an increase in image quality without introducing compromise. Obviously, over-processing images can result in unwanted anomalies, and in the past some of the low light functions required a delicate balance as on-board processors were pushed to their limits. Today’s more powerful processing engines are better able to carry out the load balancing themselves, making things simpler for integrators and installers.

The first benefit is the use of precision sensors. As with most IT and technological components, CMOS image sensors are advancing and quality is being improved. There is also a trend towards optimising chipsets for the challenges of surveillance. This is more likely to happen with manufacturers who are involved in the manufacture of chipsets, although economies of scale do mean many brands now use the same or very similar sensors.

Sensors are electro-optical devices which are populated by arrays of light sensitive picture elements (pixels), which convert the light into electric signals based upon its characteristics. The more sensitive a CMOS sensor, the better it will perform in low light applications. It stands to reason that this increased sensitivity also impacts on cost, so the better sensors are typically found on higher-end devices.

Combining a sensitive image sensor with an advanced image signal processor (ISP) is also vital to deliver good quality images in challenging conditions. As with CMOS sensors, there are ISP modules which have been optimised specifically for the delivery of video surveillance in hostile conditions.

Roles performed by the ISP include white balance, noise reduction, exposure control, gamma correction, dynamic range management, etc. Again, the leading manufacturers will have optimised these algorithms for surveillance needs.

With higher resolution cameras, the pixels get smaller, which means it becomes critical that a signal can be created from very low levels of light. Achieving this without introducing noise is critical. When a weak signal is amplified, the noise is also boosted.
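The point about amplification can be shown with a small simulation. This is a simplified sketch, not a model of any real sensor: the signal level, noise level and gain are assumed values in arbitrary units, and the noise is taken to be Gaussian.

```python
import random
import statistics


def snr(samples):
    """Signal-to-noise ratio estimated as mean level divided by standard deviation."""
    return statistics.mean(samples) / statistics.stdev(samples)


random.seed(0)  # fixed seed so the run is repeatable

TRUE_LEVEL = 10.0   # assumed weak pixel signal, arbitrary units
NOISE_SIGMA = 2.0   # assumed noise level, same units
GAIN = 8.0          # assumed amplification factor

# Simulate many readings of one weakly lit pixel, then amplify each reading.
raw = [TRUE_LEVEL + random.gauss(0.0, NOISE_SIGMA) for _ in range(10_000)]
amplified = [GAIN * value for value in raw]

print(f"raw:       noise {statistics.stdev(raw):.2f}, SNR {snr(raw):.2f}")
print(f"amplified: noise {statistics.stdev(amplified):.2f}, SNR {snr(amplified):.2f}")
```

The amplified signal is brighter, but the noise grows by the same factor, so the signal-to-noise ratio is unchanged. Gain alone cannot recover detail that was never captured.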

Thankfully, the leading cameras can apply filtering which removes much of the unwanted noise while maintaining critical video data, retaining required forensic information. When the digital algorithms have been correctly tweaked by the manufacturer, cameras can deliver a high level of performance in most light conditions.

A question of focus?

The major challenge with low light performance is getting a sufficient level of light to fall on the individual pixels which make up the camera’s sensor, and this becomes more difficult as resolutions rise. This has some grounding in basic physics.

Theory indicates achieving consistent low light performance with higher resolution devices will often be more challenging than it is with lower definition cameras. The reason higher resolution cameras can sometimes struggle with low light performance has to do with pixel size and the density of pixels on a chip.

Image sensors require light to fall onto the individual pixels to create a charge which sets the relative values for each individual picture element. If you consider a HD720p camera chip, it contains just under 1,000,000 pixels. In effect, this means that the surface of the chip is divided up into around 1,000,000 picture elements. Onto each one of these, a sufficient level of light must fall to create a signal every time a frame of video is created.

The strength of the light dictates the level of the signal, which in turn decides the value of that pixel. Indeed, the pixels also have separate elements for red, green and blue.

The size of the picture elements affects the low light capabilities of the camera, which is why, at a given resolution, 1/2 inch sensors are typically better performers in low light than 1/3 inch sensors.

With this in mind, consider that a 4K UHD camera has around 8,000,000 picture elements, often on a sensor which is the same size as an HD720p component. Because the pixels are consequently so much smaller, getting the required level of light to fall on each element is an increased challenge.
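The arithmetic behind this comparison is straightforward. In the sketch below, the frame dimensions are the standard ones for HD720p and 4K UHD, but the 4.8 mm active sensor width is an assumption chosen purely for illustration, and the calculation ignores any gaps between pixels.

```python
def pixel_count(width: int, height: int) -> int:
    """Total number of picture elements in a frame."""
    return width * height


def pixel_pitch_um(sensor_width_mm: float, horizontal_pixels: int) -> float:
    """Approximate width of one pixel in micrometres."""
    return sensor_width_mm * 1000 / horizontal_pixels


HD720 = (1280, 720)
UHD4K = (3840, 2160)
SENSOR_WIDTH_MM = 4.8  # assumed active width, identical for both sensors

for name, (w, h) in [("HD720p", HD720), ("4K UHD", UHD4K)]:
    pitch = pixel_pitch_um(SENSOR_WIDTH_MM, w)
    print(f"{name}: {pixel_count(w, h):,} pixels, pitch about {pitch:.2f} um")

# On the same sensor area, the area of each pixel shrinks by the ratio of pixel counts.
area_ratio = pixel_count(*UHD4K) / pixel_count(*HD720)
print(f"Each 4K UHD pixel has 1/{area_ratio:.0f} of the area of an HD720p pixel")
```

A 4K UHD frame has exactly nine times as many pixels as an HD720p frame, so on a sensor of the same size each pixel has roughly one ninth of the light-collecting area.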

Whilst we can accept processing power has gone through the roof, enabling the development of enhanced feature sets in the better cameras, and intelligent signal boosting and light scavenging features add benefits, these functions only work well if light is accurately focused onto the sensor.

One solution lies in the use of quality lenses. It is not uncommon for integrators to select a high quality camera capable of delivering decent low light performance, and then use an average lens. The result could be the additional low light performance in the higher quality camera is restricted, as the lens does not deliver enough accuracy.

In tests using high quality, correctly specified lenses, pitched against lower specification general purpose optics, cameras fitted with higher quality optics consistently delivered increased quality with regard to fine details and enhanced low light sensitivity.

The truth is lenses are not perfect. They are only as good as they need to be!

One of the more costly stages when manufacturing a lens is the precision grinding of the glass and setting up the lens combinations. The more precise the grinding and accurate the placement, the longer the process takes, and the more care required in the manufacturing process.

It stands to reason that if a lens is intended for use on a mainstream surveillance camera, there is no point in investing time and effort in grinding the glass or building the lens to a higher tolerance. Therefore, when the lens is manufactured, it only need be made as accurate as required for its intended use.

Where high levels of detail are required in low light applications, a high quality lens can increase the accuracy with which light is focused onto the pixels, thereby improving image quality and ensuring the camera’s processing engine has the best possible quality images to start with.

Another important point is to ensure selected lenses are rated for use with IR light if additional infrared illumination is being used. White light and infrared light have a slightly different focal point. This is because the longer wavelength of infrared light is refracted by the lens at a different angle. The result is a need for focus changes in day and night conditions. An IR-corrected lens compensates for this and allows a single focus setting for all conditions.

High quality lenses do carry a slight price premium over standard lenses, but the difference is less than the increase needed to buy a camera with a significantly higher sensitivity. Therefore, selecting a quality lens is a cost-effective move to enhance low light performance.

More light?

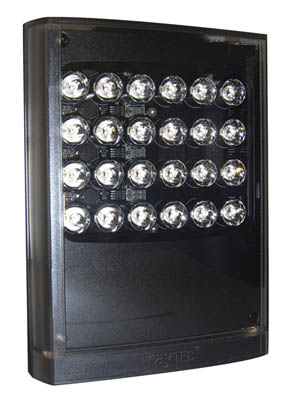

If integrators and installers are seeking a simple formula to make managing minimal light applications a breeze, there is something else which can be considered: add more light. Nothing does the job better than implementing additional illumination. Today’s illuminators are cost-effective, energy efficient, easy to install and commission, and are available in a huge variety of options.

Although cameras using integral illuminators are very popular and can be beneficial in some applications, there is a good case to be made for the use of dedicated illuminators. Because of size limitations, integral LEDs can lack sufficient power to be useful over extended ranges. Longer distances are available from some PTZ cameras, because the dome housing allows for larger LED arrays. With static cameras, there often isn’t enough room to fit sufficient LEDs to provide an increased distance.

Tests have shown many devices with integral illumination suffer from light pooling at the extreme of the specified range. Often the field of view is larger than the coverage of the light. This is because a narrower angle of light will deliver a longer range.
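The mismatch between field of view and light coverage can be estimated with basic trigonometry. The angles and distance below are assumed values for illustration, not taken from any product specification, and the sketch treats both the lens and the illuminator as simple cones sharing the same position and direction.

```python
import math


def coverage_width_m(angle_deg: float, distance_m: float) -> float:
    """Width covered at a given distance by a cone with the given full angle."""
    return 2 * distance_m * math.tan(math.radians(angle_deg) / 2)


CAMERA_FOV_DEG = 90.0    # assumed horizontal field of view of the lens
ILLUMINATOR_DEG = 30.0   # assumed beam angle of the integral LEDs
DISTANCE_M = 30.0        # assumed distance to the target area

viewed = coverage_width_m(CAMERA_FOV_DEG, DISTANCE_M)
lit = coverage_width_m(ILLUMINATOR_DEG, DISTANCE_M)

print(f"Scene width at {DISTANCE_M:.0f} m: {viewed:.1f} m")
print(f"Lit width at {DISTANCE_M:.0f} m:   {lit:.1f} m")
print(f"Share of the scene width that is lit: {lit / viewed:.0%}")
```

With these example figures, only around a quarter of the viewed width is illuminated at the far end of the range, leaving a bright pool in the centre of the image and darkness at the edges.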

Cameras with integral illumination often have the LEDs mounted around the lens, because it is a simpler manufacturing option. This can result in issues with camera siting.

With discrete illuminators, range can be specified for the specific site, with extreme distances still achievable. Also, there is no issue with pooling, as differing angles of coverage are available. Dedicated illuminators also allow the power output to be adjusted, ensuring the coverage is consistent throughout the field of view.

Importantly, dedicated illuminators can be positioned away from the camera and any reflective surfaces, allowing them to illuminate the viewed scene without flare or reflections.

Dedicated illuminators can provide consistency throughout the specified range and across the entire field of view, along with reduced noise and therefore lower bitrate needs.

Where light pollution isn’t an issue, white light allows colour information to be retained. Also, white light can be used to help with site management issues during the winter months when a site may be open for business in hours of darkness.

Many standalone illuminators make installation a simple task. Most manufacturers offer PoE versions of their illuminators, and with a number of cameras offering RJ45 power outputs, connecting an illuminator via an edge device makes financial sense.

Adding illumination is also a cost-effective solution if you consider the price/performance ratio. Most camera ranges increase in cost as processing functionality is added. Seeking the best low light performance pushes model choice towards the top of the range. However, often the standard models will be suitable if illumination has been enhanced, thus offsetting some of the cost of an illuminator.

Adding illumination delivers significantly better low light performance for average cameras. Where legacy devices are in use, adding illumination can be more cost-effective than upgrading cameras.

In summary

Dealing with minimal light in security environments has been a challenge for many years, and going forwards it will remain a challenge. The bottom line is this: without light, you have no video.

That said, the leading manufacturers are pushing low light performance to levels that previously haven’t been possible, and clean colour video at low lux levels is now a reality.

Image signal processing advances have pushed the boundaries in terms of performance, and the increased use of high quality CMOS sensors allows significant improvements to be realised.

Add in high quality optics and again performance improves. Plus, you could always add more light.