The modern security market enjoys an embarrassment of riches when it comes to the creation of smart solutions, allowing a wide range of added value benefits to be realised using the available technology. Despite this, implementing systems that solely deliver security-based performance still represents a significant proportion of applications. IVA – intelligent video analytics – gives the ability to detect anomalies and filter out nuisance alarms or innocuous activity, in a cost-effective package. Benchmark considers the role of IVA in security management.

The benefits of IVA (intelligent video analytics) are manifold, and while they cover both security and business intelligence applications, the role of video analytics as a security detection tool remains central. It must be remembered that for many years, most businesses and organisations had video systems installed for one reason: security surveillance. It has only been as the technology evolved to offer greater flexibility that business intelligence has grown in popularity.

Despite these additional benefits, video remains a very effective detection technology. The power of video is well understood, and the differences between today’s networked systems and the ‘closed circuit’ options of a decade ago are significant. Traditionally, video was a reactive tool, allowing users to view footage of incidents after they had occurred. It helped make sense of incidents rather than preventing them. There was (and still is) a deterrent effect with such installations, but the reality is that traditional video surveillance does little to prevent incidents.

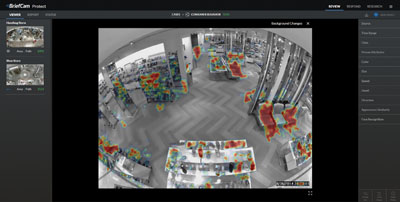

Today’s advanced solutions are very different. They are increasingly being used in a proactive way, helping to detect incidents and threats, even spotting trends, which allow businesses and organisations to take action before an incident occurs. Additionally, today’s technology allows the user to quickly gather and assess all relevant data, without the need to waste time searching for valuable information amongst a sea of footage containing little or nothing of interest.

Improvements in processing and data management have resulted in significant advances in video analytics. Where once many of the IVA options were either basic and affordable or efficient but costly, today’s IVA choices deliver enhanced capabilities in cost-effective packages. Much of this is due to the increased use of GPUs, the advent of AI and deep learning, plus an increase in service-based video analytics.

Despite this, there can still be a somewhat simplistic approach to IVA as a security tool, which sees many of the benefits available from modern solutions minimised. For customers, this equates to a lower return on investment.

IVA flexibility

It is not uncommon for intelligent video analytics to be deployed in a somewhat linear fashion. If there is a secure area and the operational requirement is for intruders to be detected, a simple intruder detection analytic is used to identify whenever people enter a defined area. Similarly, if a perimeter requires protection, often a line-crossing algorithm is used to create a virtual fence, identifying whenever someone crosses from an insecure to a secure area. Loiter detection is used to detect people loitering in vulnerable or high-risk spaces, such as near ATMs or public facilities which might be unmanned.

While it must be said that these uses are obvious and simple, and in many applications will provide the required level of protection, they don’t tell the full story in terms of the potential flexibility on offer from IVA.

Where once video analytics was often limited to the use of one IVA rule per video stream due to a lack of processing power, this is no longer the case. Whether IVA is implemented at the edge (such as directly on a camera or encoder) or is server-based in a central location, the available processing power allows multiple IVA implementations on the same video stream which can add a layer of alarm filtering, thus enhancing the credibility of the solution.

For example, if using simple intrusion detection, the IVA will generate an alarm if a person (or vehicle, dependent upon the selected target classification) is sensed in the defined alarm zone. In simple applications, this might be enough, but by combining the algorithm with other options, advanced filtering can be added.

For example, by linking an intrusion detection zone with a directional line cross algorithm, the system will be able to differentiate between a person (or vehicle) entering the zone from an insecure area outside of the perimeter, as opposed to a target entering the zone from a secure area, such as if they are leaving the site rather than entering it.

Equally, it can be established if someone entering the detection zone from an insecure area is being met by someone entering from a secure area. Whilst still a simple alarm event, the additional algorithm makes effective filtering a real possibility.
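As a rough illustration of how a detection zone might be linked with a directional line-cross rule, the sketch below classifies a tracked target as entering or leaving. It is a minimal example under stated assumptions, not how any particular IVA product works: the rectangular zone, the function names and the convention that the secure side gives a positive cross product are all hypothetical, and the virtual fence is treated as an infinite line for brevity.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float]
Zone = Tuple[float, float, float, float]  # x_min, y_min, x_max, y_max


def side_of_fence(a: Point, b: Point, p: Point) -> float:
    """Cross product of (b - a) and (p - a); its sign says which side of the fence p is on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def in_zone(zone: Zone, p: Point) -> bool:
    """True if p lies inside the axis-aligned detection zone."""
    x_min, y_min, x_max, y_max = zone
    return x_min <= p[0] <= x_max and y_min <= p[1] <= y_max


def classify_track(track: List[Point], zone: Zone, a: Point, b: Point) -> Optional[str]:
    """Return 'entering', 'leaving' or None for a list of tracked positions.

    Assumption: points with a positive cross product are on the secure side.
    The fence is treated as an infinite line through a and b.
    """
    for prev, curr in zip(track, track[1:]):
        if not in_zone(zone, curr):
            continue  # crossings outside the detection zone are ignored
        before = side_of_fence(a, b, prev)
        after = side_of_fence(a, b, curr)
        if before < 0 <= after:
            return "entering"  # insecure side -> secure side
        if after < 0 <= before:
            return "leaving"  # secure side -> insecure side
    return None
```

Only a track that is both inside the zone and crossing the fence in the inbound direction is reported as entering, which is the filtering effect described above.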

Combining different IVA configurations also allows actions to be prioritised or adapted to specific circumstances. In the previous example, it would be possible to set different outcomes for each scenario.

Someone entering the detection zone from an insecure area might trigger real-time video recording and create a bookmark for the event, whilst also sending a push notification including a video clip to an on-site operator. However, someone entering the detection zone from a secure area might simply have the footage flagged with a bookmark, or recorded in real time, without any notification being sent.
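In configuration terms, this kind of prioritisation can be thought of as a simple mapping from scenario to response. The sketch below is illustrative only; the scenario keys and action names are hypothetical and would be replaced by whatever the video management software in use actually exposes.

```python
from typing import Dict, List

# Hypothetical scenario and action names, chosen for illustration only.
ACTIONS_BY_ENTRY: Dict[str, List[str]] = {
    "from_insecure": ["record_real_time", "bookmark", "push_notification_with_clip"],
    "from_secure": ["bookmark"],
}


def actions_for(entry: str) -> List[str]:
    """Return the configured responses, or an empty list for an unknown scenario."""
    return list(ACTIONS_BY_ENTRY.get(entry, []))
```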

Layering IVA algorithms allows varying criteria to be set for alerts meeting different threat levels, adding flexibility to the overall design of a solution. IVA triggers can also be used to ‘activate’ other IVA algorithms, thus acting as a Stage 1 and Stage 2 alarm. This ensures any Stage 2 activations are only treated as alarm events if preceded by a Stage 1 alert.
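The Stage 1 and Stage 2 relationship can be sketched as a small state holder. This is a minimal example rather than a description of any vendor's implementation: the class name is hypothetical, and the time window within which Stage 2 must follow Stage 1 is an added assumption, since the text only requires that Stage 1 comes first.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StagedAlarm:
    """Treats a Stage 2 trigger as an alarm only if a Stage 1 alert preceded it."""

    window_s: float = 30.0  # assumed validity period of a Stage 1 alert, in seconds
    armed_at: Optional[float] = None

    def stage1(self, t: float) -> None:
        """Record a Stage 1 alert at time t (seconds)."""
        self.armed_at = t

    def stage2(self, t: float) -> bool:
        """Return True if this Stage 2 trigger should be treated as an alarm."""
        if self.armed_at is None:
            return False  # no preceding Stage 1 alert
        if t - self.armed_at > self.window_s:
            self.armed_at = None  # the Stage 1 alert has expired
            return False
        return True
```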

The flexibility on offer from modern IVA solutions is also being further enhanced as the use of artificial intelligence (AI) increases.

A smarter approach

Advances in processing, coupled with the increased use of GPUs, are creating more advanced IVA systems. Firstly, the growth in AI and deep learning means that configuring IVA – a vital and often time-consuming step – is being made simpler, with a number of effective self-learning algorithms finding their way to market.

More complex IVA applications need to be set up correctly, and as site conditions change the IVA may need tweaking. The result can be a slow and costly configuration period. However, by using AI and deep learning, the IVA can filter out nuisance activations as it can be taught about everyday activities and what constitutes exceptions. This could save time and money, and reduce the investment required for system set-up if the system has been ‘trained’ correctly.

AI, neural networking and deep learning are current buzz-phrases associated with machine intelligence. In the past, some manufacturers claimed to offer smart systems based on neural networking, but processing limitations meant these options often failed to deliver the promised level of performance.

Deep learning makes use of multiple cascaded layers of algorithms. Each layer utilises input and output signals. Effectively (and in simple terms), a layer will receive an input, which it will then modify and pass on as an output. Each subsequent layer treats the output of the previous layer as its input. Deep learning is so-called because it makes use of multiple processing layers. Each layer can ‘transform’ the input it receives based upon the parameters it has been taught.

The key here is teaching. Many people talk about how AI systems learn, but this can confuse some users. Deep learning makes use of large datasets. For example, if you want to teach a system to identify a vehicle, such as a car, it needs to be shown tens of thousands of images and video streams containing cars, from a variety of angles. The result is the system is taught the characteristics of a car. It can then, with some degree of certainty, identify cars in video streams.

Rather than simply following a defined set of rules and criteria, the high level processing used by deep learning replicates the way neurons work in the brain. Certain inputs can be given greater priority than others, and the system can then calculate whether inputs are of value or not.
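The idea of cascaded layers, each applying its own weighting to the inputs it receives, can be shown with a toy example. The sketch below is a simplification under stated assumptions: the weights are passed in by hand rather than learned from a dataset, the sigmoid activation is one common choice among many, and the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Layer = Tuple[List[List[float]], List[float]]  # (weights per unit, bias per unit)


def dense_layer(inputs: Sequence[float], weights: List[List[float]], biases: List[float]) -> List[float]:
    """One layer: each unit takes a weighted sum of its inputs, then applies a sigmoid."""
    outputs = []
    for unit_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(unit_weights, inputs)) + bias
        outputs.append(1.0 / (1.0 + math.exp(-total)))
    return outputs


def forward(inputs: Sequence[float], layers: List[Layer]) -> List[float]:
    """Pass a signal through the layers; each layer's output is the next layer's input."""
    signal = list(inputs)
    for weights, biases in layers:
        signal = dense_layer(signal, weights, biases)
    return signal
```

A larger weight gives the corresponding input more influence on the output of a unit. In a trained system these weights are the parameters the network has been taught.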

By breaking tasks down and filtering specific information, deep learning enables machines to perform complex tasks. While standard IVA systems tend to look at pixel values for input data, deep learning systems can use pixel values, edges, vector shapes and a host of other visual elements to ‘recognise’ objects. This is achieved by ‘training’ the system.

In the past, the machine learning community has worked to train deep learning-based systems to recognise objects. For example, machines have been taught to differentiate between a quadruped animal and a human on their hands and knees. They can also identify the type of animal if trained to do so. In security and video analytics applications, the training can include teaching the system about behavioural traits, identification of individuals or specific types of vehicles, unexpected or unusual activities, etc.

This is important because it allows video analytics to be deployed with fewer incident-specific configurations. Instead, systems present end-users with a range of events and incidents. The user can then decide which of these are important and which are innocuous and therefore of little interest. This helps to ensure the system only identifies events of interest in the future, as the user’s selections are used to enhance the training.

Deep learning allows the system to be continually trained over a period of time. This means if it detects an object, activity or an event that it has not seen before, this can be flagged and presented to the end-user. They can then decide whether or not the system should continue to notify for such occurrences or ignore any similar incidents when they occur.
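The feedback loop described here can be reduced to a very simple sketch. It is a stand-in, not a deep learning implementation: a real system would feed the user's decisions back into model training, whereas this hypothetical class just remembers a decision for each event label.

```python
from typing import Dict


class FeedbackFilter:
    """Remembers the user's decision for each kind of event (a simplified stand-in for retraining)."""

    def __init__(self) -> None:
        self.decisions: Dict[str, bool] = {}  # event label -> True (notify) or False (ignore)

    def handle(self, label: str) -> str:
        """Return 'ask_user' for an unseen event type, otherwise the stored choice."""
        if label not in self.decisions:
            return "ask_user"
        return "notify" if self.decisions[label] else "ignore"

    def record_decision(self, label: str, notify: bool) -> None:
        """Store whether similar events should raise a notification in future."""
        self.decisions[label] = notify
```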

It is important to understand that deep learning is an intelligence-based technology, and will have an increasing number of applications as it is rolled out into common usage, including outside the security sector. As such, datasets for an increasing number of applications will be created, which will lead to solutions being available with a variety of capabilities.

For security applications, and specifically video- and audio-based surveillance tasks, it is vital that the technology is properly implemented. This makes it very important for end users to select partners – integrators and manufacturers – who have an established track record in delivering intelligent security technologies.

The stages of IVA processing

Object detection is the first stage in accurate video analytics. This process senses changes within a video stream by analysing differences between the individual frames in a series of images. This procedure is chiefly used to identify variables within the video data, and is not designed for precise detection.
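Comparing consecutive frames can be illustrated with basic frame differencing. The sketch below assumes greyscale frames held as nested lists of pixel intensities, and uses an arbitrary threshold; both the function names and the threshold value are hypothetical, and production systems use considerably more robust methods.

```python
from typing import List

Frame = List[List[int]]  # greyscale pixel intensities, 0 to 255


def changed_pixels(prev_frame: Frame, curr_frame: Frame, threshold: int = 25) -> List[List[bool]]:
    """Mark each pixel whose intensity changed by more than the threshold between frames."""
    return [
        [abs(curr - prev) > threshold for prev, curr in zip(prev_row, curr_row)]
        for prev_row, curr_row in zip(prev_frame, curr_frame)
    ]


def motion_ratio(mask: List[List[bool]]) -> float:
    """Fraction of pixels flagged as changed; 0.0 for an empty mask."""
    total = sum(len(row) for row in mask)
    changed = sum(sum(row) for row in mask)
    return changed / total if total else 0.0
```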

The next stage of processing involves tracking. This detects moving objects within the video stream. This procedure is particularly suitable for detection tasks in applications where there is a low number of sources of disturbance.
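One simple way to follow a moving object from one frame to the next is to match each known track to its nearest new detection. The sketch below is a greedy nearest-centroid matcher offered as an assumption-laden illustration: the function name and distance limit are hypothetical, tracks with no nearby detection are simply dropped, and real trackers handle occlusion and identity far more carefully.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def associate(tracks: Dict[int, Point], detections: List[Point], max_dist: float = 50.0) -> Dict[int, Point]:
    """Greedily match each track to its nearest detection; leftover detections start new tracks."""
    updated: Dict[int, Point] = {}
    unmatched = list(detections)
    for track_id, position in tracks.items():
        if not unmatched:
            break
        nearest = min(unmatched, key=lambda d: math.dist(position, d))
        if math.dist(position, nearest) <= max_dist:
            updated[track_id] = nearest
            unmatched.remove(nearest)
    next_id = max(tracks, default=-1) + 1  # simple ID scheme for this sketch
    for detection in unmatched:
        updated[next_id] = detection
        next_id += 1
    return updated
```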

Object classification is the third stage of processing, and enables differentiation between pre-defined types of objects (e.g. people, animals, vehicles, etc.) in a video stream. The objects in a video image are analysed and classified based on known and defined characteristics. These can then be tracked across a series of images.

This procedure is suitable for detection tasks in applications with various sources of disturbance.

Object identification provides the ability to recognise specific object properties (e.g. faces, people, vehicle number plates, etc.) in a video stream. In this process, objects in a video image are analysed and identified based on pre-defined characteristics. This procedure is only suitable for use in specific conditions and for special applications.

Most IVA products will use the stages of processing already discussed. However, in order to increase accuracy and efficiency, object interpretation is increasingly considered a vital stage in video analytics processing.

Object interpretation allows specific object states to be detected in a video image (such as behavioural traits, number of targets, proximity of targets, etc.). To this end, objects in a video image are analysed and their status is interpreted based on pre-defined criteria. This procedure, like object identification, is used in specific conditions and for special applications.

Scene interpretation is another important element of the processing strategy. It lays the foundations for analysing specific areas in a video image in different ways. For this purpose, the corresponding areas of the image are marked and defined. The level to which areas in a scene can be defined depends very much on the flexibility of the IVA in use.

In the most basic IVA packages, areas can be defined as alarm zones or non-alarm zones. With the more advanced options, other definitions can be applied. These can include perimeter areas, the frontage of buildings, works of art or other high value assets, railway platforms and track areas, etc.

These different areas are then analysed according to the desired priority and function. This procedure is designed for adapting the analytics modules to deal with specific application scenarios. It is a stage which is critical to good application-specific analytics deployments.
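Marking areas of a scene and analysing them by priority can be sketched with polygons and a standard ray-casting test. This is an illustrative example only: the `SceneZone` structure, the zone names and the numeric priorities are hypothetical, and points lying exactly on a polygon edge are not treated specially.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point = Tuple[float, float]


@dataclass
class SceneZone:
    name: str
    priority: int  # higher number = more important (an assumed convention)
    polygon: List[Point]


def point_in_polygon(p: Point, polygon: List[Point]) -> bool:
    """Ray casting: count how many polygon edges a horizontal ray from p crosses."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge spans the ray's height
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def zone_for(p: Point, zones: List[SceneZone]) -> Optional[str]:
    """Return the name of the highest-priority zone containing p, if any."""
    hits = [z for z in zones if point_in_polygon(p, z.polygon)]
    return max(hits, key=lambda z: z.priority).name if hits else None
```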

The final stages of processing are not carried out by the analytics engine. These are human interpretation and decision-making.

Alongside these computational procedures, there is an array of other methods for the automated processing and analysis of video images, such as image comparison, image pixelation and other evolving techniques. Even so, IVA remains a tool to assist users.

All these various procedures are designed to support human beings, not to replace them. Therefore human interpretation of the analysis results is always necessary. Moreover, if an event occurs, the necessary measures will only be initiated after verification by trained users.

Applying analytics

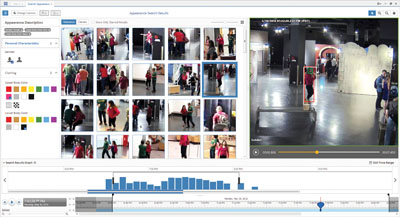

With IVA implementations, the first consideration is whether the system needs to detect general exceptions or very specific incidents. Some analytics engines have been created to detect motion in a defined area. The results can be filtered by a number of criteria, including direction and speed of travel, object size, whether it is a person or vehicle, colour information, point of appearance in the image, etc.

Such analytics are commonly available and require the filters to be configured for each video stream in order to function as expected.
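Per-stream filtering of this kind can be pictured as a rule object checked against each detection. The sketch below is a minimal example with hypothetical field names and units; it covers only object type, speed, size and direction of travel, and is not modelled on any specific analytics engine.

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass
class Detection:
    kind: str  # e.g. "person" or "vehicle"
    speed: float  # assumed units: metres per second
    height: float  # apparent size, assumed to be in pixels
    heading: float  # direction of travel in degrees


@dataclass
class FilterRule:
    kinds: Optional[Set[str]] = None  # None means any object type
    min_speed: float = 0.0
    max_speed: float = float("inf")
    min_height: float = 0.0
    heading: Optional[float] = None  # None means any direction
    heading_tolerance: float = 45.0

    def matches(self, d: Detection) -> bool:
        """True only if the detection passes every configured criterion."""
        if self.kinds is not None and d.kind not in self.kinds:
            return False
        if not (self.min_speed <= d.speed <= self.max_speed):
            return False
        if d.height < self.min_height:
            return False
        if self.heading is not None:
            # Smallest angular difference, allowing for wrap-around at 360 degrees
            diff = abs((d.heading - self.heading + 180.0) % 360.0 - 180.0)
            if diff > self.heading_tolerance:
                return False
        return True
```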

Alternatively, very specialist video analytics can be specified which support tightly defined conditions, such as detecting a vehicle travelling in the wrong direction on a designated route.

While some basic IVA engines will be able to achieve similar results to the second, more bespoke approach, they will require more effort to configure, and may not deliver the specialist performance of a bespoke system.
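To give a sense of what a wrong-direction rule involves at its simplest, the sketch below projects a target's overall movement onto the permitted direction of travel. It is a hedged illustration rather than a specialist analytic: the function name and the minimum travel distance are hypothetical, and it ignores lanes, perspective and curved routes.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def is_wrong_way(track: List[Point], permitted: Tuple[float, float], min_travel: float = 5.0) -> bool:
    """True if the track's net movement opposes the permitted direction by at least min_travel."""
    if len(track) < 2:
        return False
    length = math.hypot(permitted[0], permitted[1])
    if length == 0:
        return False  # no permitted direction defined
    ux, uy = permitted[0] / length, permitted[1] / length
    dx = track[-1][0] - track[0][0]
    dy = track[-1][1] - track[0][1]
    along = dx * ux + dy * uy  # signed distance travelled along the permitted direction
    return along < -min_travel
```

The minimum travel distance is there so that small amounts of jitter in the tracked position do not raise an alert.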

A final consideration is this: setting up IVA is not a one-time task. It will be necessary to set up the IVA and allow it to run and generate alerts. These then need to be assessed and adjustments made to eliminate nuisance alarms while preserving a high degree of catch performance. Without proper configuration, accuracy will suffer!
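When assessing alerts during this tuning period, two simple figures are useful: the share of alerts that were nuisance alarms, and the share of genuine events that were caught. The sketch below shows the arithmetic; the function name and the counts used in the example are hypothetical.

```python
from typing import Dict


def tuning_report(true_alarms: int, nuisance_alarms: int, missed_events: int) -> Dict[str, float]:
    """Summarise one assessment period from manually reviewed counts."""
    raised = true_alarms + nuisance_alarms  # every alert the system generated
    actual = true_alarms + missed_events  # every genuine event that occurred
    return {
        "nuisance_rate": nuisance_alarms / raised if raised else 0.0,
        "catch_rate": true_alarms / actual if actual else 0.0,
    }


# Example: 18 genuine alerts, 6 nuisance alerts, 2 genuine events missed
report = tuning_report(18, 6, 2)
```

Adjustments are worthwhile when they lower the nuisance rate without lowering the catch rate.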