Facial recognition technologies have been trialled in a variety of security and safety applications in the past few years, with the schemes having varied success. While the technologies have been included in a range of dedicated applications from the very basic right up to advanced and complex critical solutions, often their effectiveness has depended upon the expectations of the user and the fitness for purpose of the technology in any given application. With the advent of AI-enabled systems, is it time to rethink how the benefits can be realised?

One of the most effective ways of identifying individuals, whether for security or site management purposes, is through facial information. As far as humans go, recognition of people based upon their facial details is both intuitive and well practised. It’s something we’re all used to doing, and it’s one reason that in many applications, the involvement of an operator remains key.

While facial recognition is intuitive for the majority of humans, the processing and storage can be slow. Faces might be identified but recalling additional information can take time. How many times do people know someone’s face, but struggle to remember exactly who they are? This hesitancy isn’t what is needed in a critical situation. If humans are prone to such problems with an average number of people, the situation quickly deteriorates when faced with hundreds or thousands of individuals passing through a secure area.

Facial recognition is a cognitive skill that humans are very good at. Processing data and recalling stored information are things that computers are very good at. For many years the challenge has been to bring the two together in an effective and efficient way. With the understanding that it is more realistic to teach a machine to recognise faces than to teach people to handle thousands of computational commands every second, the race to improve facial recognition systems has been ongoing for some time.

Historical inaccuracies

Accuracy has always been a challenge for facial recognition, because the human face includes so many variables. Because of processing limitations in the early days of facial recognition, a restricted number of criteria were used to define any given face. This usually included plotting certain characteristics, mapping them and using general details such as skin tone, hair colour, etc., for filtering.

Obviously, given the low level of information used, the results weren’t highly accurate. The levels of false accepts and false rejects were unacceptable, but at that time these had to be considered alongside the stage which the technology’s development had reached.

When using very basic plotting to identify a face, there are too many conditions which can impact on the mapping. For example, the dimensions of a face template created by plotting the eyes, nose and mouth (the three main elements in the earliest implementations) change if the subject is static, talking, sneezing or yawning. The fact is the fewer plotting points used, the more errors will occur.

One of the hurdles facial recognition had to overcome in the past few decades was high profile failures during assessments. It must be acknowledged that in the majority of cases, it wasn’t that the technology was unable to do what it had been designed to do, but more a case of those judging the technology having unrealistic expectations of what it could and could not achieve.

There were some performance issues because the technology was at a very early stage in its lifecycle, and some problems which came to light during trials either couldn’t be corrected or were due to the systems being used in a different way to that which their capabilities allowed.

However, too many end users (and a significant and vocal segment of the general public) believed facial recognition could pick out a single individual in a crowded scene, accurately identify them to the police or other relevant authorities, and deliver an in-depth history of their movements. For those who wanted such capabilities, the general consensus was that the technology had failed when this wasn’t achieved.

Opponents of the technology, however, seemed convinced the systems did achieve all these things and more, and claimed the technology violated their right to privacy.

Even today, one of the most often referenced failures of facial recognition is the trial which took place in Newham during the late 1990s. Launched with much fanfare, the facial recognition system became the object of fierce debate between the local council, the police and various human rights organisations.

The mass media jumped on claims that the system never correctly identified a single person during its trial period, yet gave no explanation of the trial’s criteria and methodology.

As Moore’s Law makes obvious, the system installed two decades ago would be positively archaic today in terms of its computational capabilities. The law is usually stated as transistor density, and with it processing power, doubling approximately every two years, which means in the interim period it has become common for low cost home computing devices to have more power than the system used in the trial.

Since then, the technologies used for facial recognition and facial detection have moved on in leaps and bounds. Processing power is no longer a limitation and the cost of storage has been significantly reduced. Growth in the general availability of GPU-based systems has enabled genuine implementations of artificial intelligence (AI) and deep learning. Providers of video analytics have moved beyond facial plotting. If anything, the time is right for the benefits of facial recognition and facial detection to be exploited.

However, use of the technology still raises a significant debate about privacy, and there remains much misunderstanding about how the technology is used.

A subtle difference

When it comes to facial-based smart applications, there are two methods in widespread usage which are very different in terms of implementation and achievable results. These are facial detection and facial recognition. The definition is implicit in the terminology: one detects the presence of human targets by identifying that an object with a human face is in the image, while the other recognises (searches for and identifies occurrences of) faces which have been stored in a database, as part of either a white-list or a black-list. While very different, both in terms of technology and application, the technologies are often confused by people who are not security professionals, especially when considering what the systems will and will not be capable of doing.

Both technologies have a similarity, in that they initially need to be able to detect there is a human face within the viewed scene. The traditional method for achieving this is to use triangulation, making use of facial features (eyes, nose and mouth) to identify the patterns inherent in a human face. However, with the growing use of AI and deep learning in many smart video functions, this approach is somewhat archaic (although it can be effective for general detection).

AI can effectively be taught to recognise people in a scene and can track them until a suitable facial image is captured for identification purposes. This approach is more fluid and can deliver superior results, but it also requires increased processing capabilities.

The significant difference between detection and recognition becomes evident once the presence of a face has been identified.

Facial detection is just that: the algorithm detects that a face is present in a scene and this information is then used to trigger a pre-defined action or to alter the system configuration. This might sound somewhat limited, but the potential on offer delivers a cost-effective way to obtain maximum efficiencies from a surveillance system.

For example, video could be recorded making use of a lower frame rate or reduced image resolution when there is no activity, or activity which does not provide a positive identification of individuals in a scene, i.e. when no faces are detected. However, both the frame rate and resolution could be increased when a face is detected.
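
The switching logic described above can be sketched in a few lines. This is a minimal illustration only: the `RecordingProfile` class, the `select_profile` function and the frame rate and resolution values are all hypothetical, and a real recorder would expose its own configuration interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RecordingProfile:
    fps: int
    width: int
    height: int


# Hypothetical values chosen for illustration only.
IDLE = RecordingProfile(fps=5, width=640, height=360)
FACE_PRESENT = RecordingProfile(fps=25, width=1920, height=1080)


def select_profile(faces_detected: int) -> RecordingProfile:
    """Return the higher-quality profile only while a face is in view."""
    return FACE_PRESENT if faces_detected > 0 else IDLE


# Face counts per analysed frame, as a detector might report them.
face_counts = [0, 0, 1, 2, 0]
profiles = [select_profile(n) for n in face_counts]
print([p.fps for p in profiles])
```

In practice a hold-off period would probably be wanted so that the recorder does not flip between profiles on every frame; that is left out here for brevity.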

Video which includes face-based information could be automatically bookmarked or flagged as footage of interest, making any searches following an incident a simpler task. It is also possible to use the presence of a face in a viewed scene to dynamically create a region of interest, allowing a higher level of automation to be implemented.

Motion-based searches can also be filtered to ensure they include images capable of allowing identification. For example, specifying a motion search on a doorway would show any activity in the selected area, regardless of whether an individual could be identified from it. Applying a filter based upon facial detection would ensure that the search only returned footage where a person could potentially be identified from the video information.
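
As a rough sketch of that filter, assume each archived clip carries two metadata flags written at recording time. The `Clip` record, its field names and the `motion_search` function are hypothetical and do not reflect any particular video management system.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    camera: str
    start_s: int          # clip start, in seconds from the start of the archive
    motion_in_zone: bool  # motion seen in the operator-drawn search area
    face_detected: bool   # flag written by the face detector


def motion_search(clips, require_face=False):
    """Return clips with motion in the zone, optionally only those with a face."""
    hits = [c for c in clips if c.motion_in_zone]
    if require_face:
        hits = [c for c in hits if c.face_detected]
    return hits


archive = [
    Clip("door", 0, motion_in_zone=True, face_detected=False),
    Clip("door", 60, motion_in_zone=True, face_detected=True),
    Clip("door", 120, motion_in_zone=False, face_detected=False),
]

all_motion = motion_search(archive)
identifiable = motion_search(archive, require_face=True)
print(len(all_motion), len(identifiable))
```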

Facial recognition makes use of facial detection in the first instance. It then creates a ‘template’ from the facial information and compares that with stored data to ascertain whether the individual is registered in the system’s database. This allows the creation of white-lists (persons who are permitted to be in the location) and black-lists (people who are not authorised to be there).
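
The comparison step can be illustrated with a deliberately simplified sketch. It assumes a template is just a short tuple of numbers and that similarity is plain Euclidean distance; real systems use far larger templates and their own matching methods. The names, values and the `max_distance` tolerance are all hypothetical.

```python
import math

# Hypothetical enrolled templates (three numbers each, for readability).
whitelist = {"staff_a": (0.1, 0.9, 0.3)}
blacklist = {"banned_b": (0.8, 0.2, 0.7)}


def classify(template, whitelist, blacklist, max_distance=0.2):
    """Return (list name, person) for the nearest enrolled template,
    or ("unknown", None) if nothing is within max_distance."""
    candidates = [("whitelist", name, t) for name, t in whitelist.items()]
    candidates += [("blacklist", name, t) for name, t in blacklist.items()]
    if not candidates:
        return ("unknown", None)
    label, name, stored = min(candidates, key=lambda c: math.dist(template, c[2]))
    if math.dist(template, stored) <= max_distance:
        return (label, name)
    return ("unknown", None)


print(classify((0.12, 0.88, 0.31), whitelist, blacklist))
print(classify((0.5, 0.5, 0.5), whitelist, blacklist))
```

A face that is not close enough to any stored template is reported as unknown rather than being forced onto either list.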

Facial recognition is more processor-hungry than facial detection. It uses detection methods to identify the presence of a face, but then creates a template and searches the database for a match that falls within the prescribed thresholds to ensure accuracy. If the thresholds are tightened, so that only very close matches are accepted, searching takes longer but is more accurate. Loosening the thresholds makes the process faster but will lead to a greater number of false accepts or incorrect matches.
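
The accuracy side of this trade-off can be shown with a small worked example. It assumes the threshold is a maximum permitted distance between two templates, and the labelled comparison data is invented purely for illustration. It does not model search speed.

```python
# (distance between probe and stored template, truly the same person?)
# Invented figures for illustration only.
comparisons = [
    (0.10, True), (0.25, True), (0.40, True), (0.55, True),
    (0.35, False), (0.50, False), (0.70, False), (0.90, False),
]


def error_counts(comparisons, max_distance):
    """Count false accepts and false rejects at a given tolerance."""
    false_accepts = sum(1 for d, same in comparisons if d <= max_distance and not same)
    false_rejects = sum(1 for d, same in comparisons if d > max_distance and same)
    return false_accepts, false_rejects


for tolerance in (0.2, 0.45, 0.6):
    print(tolerance, error_counts(comparisons, tolerance))
```

With this data, widening the tolerance from 0.2 to 0.6 removes the false rejects but introduces false accepts, which is the balance an installer has to strike for a given site.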

Recent advances in smart video search technology have also seen facial recognition used more widely when looking for associated video clips. When used in this way, facial recognition does not compare captured facial templates to a database. Instead it uses faces selected by an operator to search through archived and live footage, seeking other instances where similar faces appear.

AI-enabled solutions

In recent years the development of facial recognition technologies has, much like other video analytics, advanced significantly. Algorithms to detect faces have improved and accuracy has reached a level that makes it suitable for an increasingly wide range of applications.

Despite increases in processing speed over the many years the technology was being developed, facial recognition – much like many video analytics – only used very small amounts of the data gathered by the system. Most facial recognition applications used a limited number of streams, and predominantly worked with live feeds. However, this situation has changed with the introduction of GPUs in a growing range of video hardware and servers.

Because of the huge increase in processing cores, GPU-based AI and deep learning not only enhances the performance of facial recognition systems but also enables in-depth forensic searches to be carried out across multiple streams on large and distributed systems. The searches can be historical, in that they can include archived data as well as live streams.

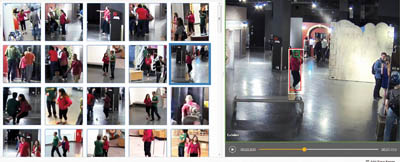

When an individual is identified, the operator can search for all instances in which they appear. The system will find the most obvious matches; these may be further refined using other information such as the colour of clothing.

As the system delivers matches, the operator can select those that are correct and reject any that are not. The system will then use the accepted instances to further learn about the individual being searched for. This enables facial information captured at different angles to be included in searches.
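
How a deployed system learns from operator feedback is not something this article can specify, so the following is only a stand-in to show the principle. It assumes templates are short numeric tuples and simply averages the original query with the matches the operator accepted; the `refine` function and all values are hypothetical.

```python
import math


def refine(query, accepted):
    """Average the query with operator-accepted templates.
    A simple stand-in for learning, not a deep learning method."""
    vectors = [query] + list(accepted)
    return tuple(sum(v[i] for v in vectors) / len(vectors) for i in range(len(query)))


frontal_query = (1.0, 0.0)                    # template from the selected face
accepted_matches = [(0.6, 0.4), (0.5, 0.5)]   # matches the operator confirmed
side_view = (0.4, 0.6)                        # the same person at a different angle

refined_query = refine(frontal_query, accepted_matches)

# The refined query sits closer to the side view than the original did.
print(math.dist(frontal_query, side_view) > math.dist(refined_query, side_view))
```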

As the results continue to teach the system about the target’s facial information, supplementary data such as clothing colour becomes less critical. This can result in appearances of the individual from other periods being flagged if any exist.

The speed of the search and the fact that the system is learning more about the facial information means it can also identify any appearances of the individual on live streams, essentially tracking them around the site if they are still at the location.

The speed and ease with which the system can search, coupled with the ability to constantly learn more about the facial data being searched for, allows the technology to be used for a wider range of applications.

Security is an obvious use. The ability to identify suspects, build an audit trail of their activity prior to an event, and even search for them in real-time, empowers operators and allows black-lists to be managed on-the-fly rather than retrospectively.

However, facial recognition could also be used for access control. Currently, biometric technologies are used for contactless access control, but these often require users to stop and wait while scanning takes place, usually at a fixed-point terminal. This can create bottlenecks. Facial recognition can be carried out at a greater distance, while people are on the move.

Another option, and one which has been deployed by a number of airports, is to use facial recognition for status reporting. Capturing facial information allows the airport management to assess flow through the facility.

As passengers disembark from aircraft, facial information of random individuals is captured. This then provides information about how long it takes for passengers to clear immigration, baggage collection and customs. It can also be used as people enter the airport to quantify times for security checks, transfers, etc. Once the journey through the facility is complete and a report generated, the facial information can be discarded, safeguarding privacy.
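
A simple sketch of such a report follows. It assumes each sampled passenger is represented by an anonymous token rather than a name, and that each sighting records a checkpoint and a time; the checkpoint names, timings and the `average_transit` function are invented for illustration.

```python
from statistics import mean

# (anonymous token, checkpoint, minutes since the aircraft door opened)
sightings = [
    ("t1", "gate", 0), ("t1", "immigration", 18), ("t1", "customs", 41),
    ("t2", "gate", 2), ("t2", "immigration", 26), ("t2", "customs", 47),
]


def average_transit(sightings, start, end):
    """Average minutes between two checkpoints across sampled passengers."""
    times = {}
    for token, checkpoint, minute in sightings:
        times.setdefault(token, {})[checkpoint] = minute
    durations = [t[end] - t[start] for t in times.values() if start in t and end in t]
    return mean(durations) if durations else None


report = {
    "gate_to_immigration": average_transit(sightings, "gate", "immigration"),
    "gate_to_customs": average_transit(sightings, "gate", "customs"),
}

# Discard the per-person records once the aggregate report exists.
sightings.clear()
print(report)
```

Only the aggregate figures survive in `report`; the per-person sightings are cleared as soon as it has been built.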

Another area that could benefit from AI-based facial recognition is the care of vulnerable people. With an ageing population needing increased levels of support, facial recognition could be used to ensure their status and location are reported on a regular basis, enabling carers to respond should any of them find themselves in difficulty.

In summary

Facial recognition has had a challenging period of development, and because of the resources needed to make it effective it developed a reputation – albeit an incorrect one – for not being effective. However, the increased use of GPUs – and the subsequent sea-change in processing capabilities – means that the technology now offers a valuable and flexible tool for many applications.

Using the right facial analytics processing engine, coupled with GPU-enabled servers, delivers a tool that empowers users, adds real-world benefits and creates a smart solution that exceeds user expectations.

There is a down-side, in that facial recognition is often used by privacy campaigners as the ‘poster technology’ to illustrate privacy invasions. However, it remains an essential filter for searching and enables a number of value-added benefits which can be positive for the data subjects. As a result, care must be taken when implementing facial-based analytics to ensure complete compliance with relevant data protection legislation, and to retain user support for the technology.