Today’s technology-based systems offer a wide range of benefits, including a high degree of flexibility with regard to system topologies. The ability to create bespoke solutions which neatly dovetail with any application’s infrastructure is a value-added bonus, but it creates a need to decide where the intelligence in a system should reside. To remain cost-effective, it is important to ensure systems don’t end up being over-specified.

One of the most significant benefits of a modern smart solution is the flexibility to locate resources where they can deliver optimal impact. Whilst older technologies often created a need to bring all data back to a central location for processing and management, today’s use of networked infrastructure makes system design and deployment simpler and more cost-effective because of the enhanced design flexibility on offer.

Back in the days when the solutions sector relied on analogue technology, one of the biggest restrictions was that individual devices required dedicated cabling. Each device required its own separate connection, and data usually flowed in a single direction.

The inability of devices to share infrastructure connections meant each individual link required at least one dedicated cable, and each point where data needed to be shared required some sort of switch or interface. As a result, the easiest thing to do was often to bring all the connections back to one central point, usually a control room, and manage the system’s functionality from there.

The downside to this approach was that system design options were limited, integration became increasingly difficult to achieve, and potential cost efficiencies were lost. However, at the time these weren’t considered limitations of the technology, because the vast majority of systems suffered the same issues. It was simply the way things were.

Technology-based industries never opted for a centralised approach because it was the best option, nor because it was cost-effective. If anything, it was an approach fraught with difficulty, and it often increased costs while also wasting time and energy in the field. It was a necessity.

The most obvious issue with a centralised approach is that it includes a single point of failure by design. It also requires an increased amount of cabling, with excessive runs often becoming the norm.

Interestingly, end users rarely equated increased costs with lengthy cable runs. Customers saw value in the hardware, not the infrastructure, and this often made it difficult for integrators to justify high installation costs.

The centralised approach also created limitations in terms of performance; data processing and information management invariably suffered. With all of a site’s data being handled in one place, the available processing and storage capacity was often insufficient. This led to a whole range of ways of ‘managing’ the situation, most of which meant potentially valuable data was thrown away to free up the system’s resources.

For many years the centralised approach was – and for some installations still is – the predominant model when designing systems. In the past, the available technology simply didn’t allow any other approach at a realistic cost. Not only did every device require a dedicated cable, but many centralised devices supported only a limited number of inputs.

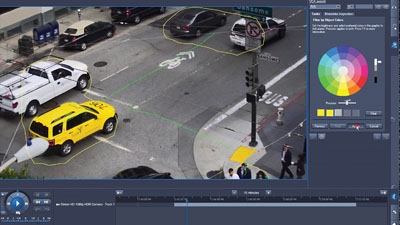

Security Operations Center

A great example of this comes when you consider how video-based systems archived captured data. Video feeds from multiple cameras would be brought back to a centralised point, where banks of recorders would archive the footage. The recorders could only handle a single input, so management units, typically switchers or multiplexers, converted the multiple video feeds into one single stream.

The problem was that 16 video streams had to be merged into one, so only one field from each stream was retained in turn. This not only meant the fifteen fields between each captured field were thrown away, but also that quality was poor, as a field is only half of an interlaced frame. Add to this the fact that tapes recorded only three hours of footage at normal speed, and time-lapse recording had to be used to reduce the amount of video further so that a tape would last for 24 hours.
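The arithmetic behind this loss is easy to sketch. The snippet below assumes PAL video at 50 fields per second (the text does not name the video standard) and treats stretching a three-hour tape over 24 hours as a simple 8x time-lapse; real multiplexers and recorders varied in exactly how they sequenced cameras.

```python
# Rough arithmetic for a 16-camera analogue recording chain.
# Assumption: PAL video at 50 fields per second (25 interlaced frames/s).
FIELDS_PER_SECOND = 50
CAMERAS = 16
TAPE_REAL_TIME_HOURS = 3   # tape duration at normal recording speed
TARGET_HOURS = 24          # period a single tape must cover


def fields_per_camera_per_second(fields_per_second: float, cameras: int,
                                 tape_hours: float, target_hours: float) -> float:
    """Fields actually recorded per camera per second after multiplexing and time-lapse."""
    # The multiplexer cycles through the cameras, keeping one field from each in turn.
    after_multiplexing = fields_per_second / cameras
    # Time-lapse stretches the tape by recording only a fraction of the multiplexed fields.
    time_lapse_factor = target_hours / tape_hours
    return after_multiplexing / time_lapse_factor


rate = fields_per_camera_per_second(FIELDS_PER_SECOND, CAMERAS,
                                    TAPE_REAL_TIME_HOURS, TARGET_HOURS)
print(f"{rate:.3f} fields per camera per second")       # 0.391
print(f"one field every {1 / rate:.2f} s per camera")   # 2.56
```

Under these assumptions each camera is recorded roughly once every two and a half seconds, so only 1 in 128 of the fields it generates survives on tape.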

This loss of potentially important data was a consequence of the limited topology, which forced designers of surveillance systems to adopt a centralised recording system.

It must be said that whilst a centralised approach was often forced on system integrators by the limitations of analogue technology, the mindset of centralising system control persists to this day. In some cases there is a good argument for a centralised approach, but in many cases it isn’t necessary, nor is it desirable.

Having said that, when smart systems are considered, the debate as to whether a centralised approach makes sense changes. This is because the intelligence in the system makes use of a host of different data sources, and as such a centralised approach allows information from a variety of sub-systems to be pulled to one core system location for analysis.

Moving to the edge

The almost universal migration to network-based platforms has allowed systems to utilise ever more flexible topologies, delivering economies with regard to cabling and overall design. This has become increasingly important as the volume of data collected by smart systems rises all the time, to the point where bandwidth and storage consumption are issues which system integrators and end users increasingly need to address.

The collection, analysis and use of ‘big data’ is a reality in today’s smart solutions. The information being gathered not only has value for enhancing the protection of businesses and organisations; it also has value for a host of other purposes. These include site management, business efficiencies, process control, HR, communications, health and safety, marketing, etc.

It also needs to be considered that data today comes in a variety of formats. Traditional alphanumeric data is still a vital part of the data management landscape, and remains relatively simple to transmit, process and store. However, the depth of such data is increasing as users look to leverage every opportunity they can.

Video is a great enabling technology, and delivers benefits in a number of roles. From security and safety, through to process monitoring, site control, traffic and flow regulation, customer attraction and marketing, video adds value and generates efficiencies for most businesses and organisations.

However, despite all the good things which video can deliver, it remains a ‘heavy’ data source. It requires significant bandwidth and storage, and therefore management of video needs consideration during the design phase. The metadata which often accompanies video also needs to be factored in.

Metadata is, in very simple terms, data that describes data. Metadata contains detailed information about multiple aspects of the captured video. Because of this, it can be used to make analytics processing and tracking more efficient.

By way of an example, metadata about a video stream would include a wide range of information, such as image size and resolution, colour depth, frame rate, creation date, plus other data such as the camera type, various settings and configurations, and in some cases the geolocation data which pinpoints where the camera is when the recording is made.

Metadata becomes more interesting for those seeking to gain benefits from analytics because it also contains information about what is happening in the video. Changes in the scene are recorded, such as the size, shape and colour of an object, the time it spends in the scene along with its direction and speed of motion, global and local scene changes, etc.

Although metadata is alphanumeric and far lighter than video, it still adds to the overall system load.
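A metadata record of the kind described above might be modelled as follows. The schema and field names are hypothetical, chosen only to mirror the items listed in the text; real camera vendors and standards bodies such as ONVIF define their own formats. Serialising one record to JSON shows it is compact text, yet one record per frame, per camera, still adds up.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import Optional


@dataclass
class ObjectObservation:
    """What is happening in the scene (hypothetical field names)."""
    object_id: int
    width_px: int
    height_px: int
    dominant_colour: str
    dwell_time_s: float      # time the object has spent in the scene
    heading_deg: float       # direction of motion
    speed_px_per_s: float    # speed of motion


@dataclass
class StreamMetadata:
    """Descriptive data about the stream itself (hypothetical schema)."""
    camera_type: str
    resolution: tuple[int, int]
    colour_depth_bits: int
    frame_rate: float
    created_utc: str
    geolocation: Optional[tuple[float, float]] = None   # only some cameras report this
    objects: list[ObjectObservation] = field(default_factory=list)
    scene_change: bool = False


meta = StreamMetadata(
    camera_type="fixed dome",
    resolution=(1920, 1080),
    colour_depth_bits=24,
    frame_rate=25.0,
    created_utc="2024-01-01T12:00:00Z",
    objects=[ObjectObservation(1, 80, 200, "red", 4.5, 90.0, 35.0)],
)

# asdict() recurses into nested dataclasses; tuples become JSON arrays.
payload = json.dumps(asdict(meta))
print(len(payload.encode("utf-8")), "bytes per metadata record")
```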

Audio is increasingly becoming part of the big data landscape. Whether transmitting messages to areas within a site, allowing personnel to communicate with others on site or for general local and wide area communications, audio adds value to smart systems.

Like video, audio data can be ‘heavy’, in terms of the bandwidth required for transmission, processing load and storage.

As the focus on data mining increases, the additional value of what can be achieved often underpins the return on investment many end users are seeking. It is therefore imperative that modern smart systems are capable of allowing the data to be interrogated for a whole host of reasons, and often the best place to do this is at the edge of the network, removing the need to transmit and process data of little or no interest.
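Filtering at the edge can be as simple as dropping detections that are irrelevant or low-confidence before anything leaves the device. In this sketch the `Event` type, the labels and the 0.6 confidence threshold are all assumptions for illustration; an on-device classifier is presumed to have produced each label and confidence score.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class Event:
    camera_id: str
    label: str          # assumed output of an on-device classifier
    confidence: float   # 0.0 to 1.0


def filter_at_edge(events: Iterable[Event], labels_of_interest: set[str],
                   min_confidence: float = 0.6) -> Iterator[Event]:
    """Yield only events worth sending upstream; everything else stays on the device."""
    for ev in events:
        if ev.label in labels_of_interest and ev.confidence >= min_confidence:
            yield ev


captured = [
    Event("cam-01", "person", 0.92),
    Event("cam-01", "foliage", 0.88),   # not of interest: never transmitted
    Event("cam-01", "vehicle", 0.41),   # below threshold: never transmitted
    Event("cam-01", "vehicle", 0.77),
]
forwarded = list(filter_at_edge(captured, {"person", "vehicle"}))
print(f"forwarded {len(forwarded)} of {len(captured)} events")  # forwarded 2 of 4 events
```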

Taking a step back for a moment, many integrators will have first looked at edge-based technologies with regard to data storage. It was the first business use case widely introduced into the market, and it still makes a lot of sense. Of course, storage isn’t the only thing which can be done at the edge, and with moves to service-based systems, often the preferred location for storage is off-site.

It pays to remember that edge solutions are not all the same, and it is important not to undervalue the principle simply because some edge options are low-key. Edge does not define a technology; it merely indicates that a task – analysis, processing, storage, reporting – is happening out on the network rather than at a central common location.

For example, recording video onto SD cards in the camera is an edge option, but equally AI-enabled analytics and object classification can take place at the edge. Increasingly, the leading IT-driven data management and analytics providers are moving their processing to the edge, because it is more efficient, more secure and it makes good sense. Why push large amounts of data around a network when the processing and analysis can happen at the source of data capture or generation?

The constantly increasing demand for data, including video and audio streams, transactional information, metadata, status reports, process control data, HR information, sales trends, business intelligence, etc., creates a significant network load and places a greater emphasis on the resilience of the supporting infrastructure. As a result, it makes sense to ensure much of the ‘heavy lifting’ in terms of data processing and analytics algorithm management is performed at the edge of the network.

This localises the processing load as data is managed either at the capture device or in a peripheral unit, rather than transmitting large amounts of traffic to a central server which handles all management tasks simultaneously.
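A back-of-envelope comparison shows why localising the load matters. Every figure here is an assumption chosen for illustration: 100 cameras, 4 Mbit/s per full video stream, 20 kbit/s of metadata per camera, and full video forwarded from the edge only while an event is active, taken as 5% of the time.

```python
CAMERAS = 100
VIDEO_MBPS = 4.0        # assumed bitrate of one full video stream
METADATA_MBPS = 0.02    # assumed metadata/event bitrate per camera from edge analytics
EVENT_FRACTION = 0.05   # assumed share of time an event triggers full video upload


def central_uplink_mbps(cameras: int, video_mbps: float) -> float:
    # Centralised processing: every stream is sent to the server all the time.
    return cameras * video_mbps


def edge_uplink_mbps(cameras: int, video_mbps: float,
                     metadata_mbps: float, event_fraction: float) -> float:
    # Edge processing: metadata flows continuously; video is sent only during events.
    return cameras * (metadata_mbps + video_mbps * event_fraction)


central = central_uplink_mbps(CAMERAS, VIDEO_MBPS)
edge = edge_uplink_mbps(CAMERAS, VIDEO_MBPS, METADATA_MBPS, EVENT_FRACTION)
print(f"centralised: {central:.0f} Mbit/s, edge: {edge:.0f} Mbit/s")
print(f"reduction: {100 * (1 - edge / central):.1f}%")
```

Under these assumptions the uplink drops from 400 Mbit/s to around 22 Mbit/s; the real saving depends heavily on scene activity and on how much video a site must still retain centrally.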

As smart data plays a greater role in site and process management for businesses and organisations, it becomes vital that continuity is ensured. Any failure or weakness in the infrastructure will result in performance issues and a loss of continuity, which in turn will affect the total cost of ownership and return on investment for the business or organisation in question.

However, it is also important to ensure that pushing services to the edge does not detract from the operational requirements of the solution. A distinction needs to be made between the core role of the system and the peripheral benefits. While it is imperative the system offers the expected performance and reliability for the business or organisation, the customer’s perceived return on investment will come from the additional benefits and the added value on offer. If that is compromised, they may revert to the ‘grudge purchase’ mentality.

While issues with the peripheral benefits might not compromise the system’s core performance, the added-value elements are the ones users interact with on a daily basis. As a result, any failure to realise those benefits can leave the smart system falling short of their expectations.

The smart dilemma

The increased use of artificial intelligence (AI) in smart solutions, along with the processing power made available by combining CPUs and GPUs, means the load on networks and on data processing in general will increase, making effective infrastructure management a more critical issue.

As users look to exploit metadata-rich information and combine it with data from other sources, big data will enable ever smarter implementations to be created. However, because much of the data is generated in real time, around the clock, from an ever-increasing number of devices, the sheer volume being captured, transmitted, processed and analysed can be staggering.

As the number of smart devices increases, those designing solutions are often faced with a choice: where to put the intelligence. Is the smart technology best deployed at the edge, allowing decisions to be made in the field, or is it preferable for the intelligence to reside in a core central location, making use of data from numerous sources? The answer depends upon what the goal of the solution is.

Few businesses and organisations are looking for one solution which manages every single aspect of their business; it would be foolhardy to do so. Even with advanced technology, certain systems are more relevant to a specific set of tasks. Where the management of a site is considered – security, safety, power management, lighting, heating, process control, etc. – complementary technologies can be grouped together to create an intelligent solution.

If a centralised approach is taken, the infrastructure will need significant computational resources, storage capacity and communications redundancy. This will add to the complexity of systems, as well as the capital investment and cost of ownership. The use of unsuitable hardware and infrastructure may lead to data loss, latency, slow or incomplete processing cycles or – in a worst-case scenario – failure of the entire system.

With a trend towards managing the burgeoning data flow using edge-based processing and analysis, it is critical that correctly designed infrastructure is in place. This helps to reduce the overall network load by performing data processing at the source of the information.

As the processing happens at the edge, the need for data transfer is reduced, freeing up bandwidth and enhancing overall system efficiency. This approach not only reduces costs but also enhances cybersecurity, as less data is moved around the network for centralised processing to take place.

Edge-based management also reduces the risk of total data loss, and simplifies compliance with important data management policies, as data is kept local.
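The ‘total data loss’ point can be made concrete with a simple probability model. It assumes each unit fails independently with the same probability over some period, which real installations (where devices may share power supplies or switches) will not strictly satisfy; the 16 devices and 1% failure probability are illustrative assumptions.

```python
def fraction_lost_on_single_failure(devices: int, centralised: bool) -> float:
    """Share of channels lost when one unit fails."""
    # Centralised: the one server carries every channel. Edge: a failed device loses only its own.
    return 1.0 if centralised else 1.0 / devices


def p_total_loss_central(p_server: float) -> float:
    # Everything depends on one server, so total loss is as likely as that server failing.
    return p_server


def p_total_loss_edge(p_device: float, devices: int) -> float:
    # Total loss requires every independent edge device to fail together.
    return p_device ** devices


DEVICES = 16
P_FAIL = 0.01  # assumed failure probability per unit over the period considered

print(f"single failure, centralised: {fraction_lost_on_single_failure(DEVICES, True):.0%} of channels")
print(f"single failure, edge:        {fraction_lost_on_single_failure(DEVICES, False):.2%} of channels")
print(f"P(total loss), centralised:  {p_total_loss_central(P_FAIL):.1e}")
print(f"P(total loss), edge:         {p_total_loss_edge(P_FAIL, DEVICES):.1e}")
```

With equal failure probabilities the expected share of data lost is the same in both models; what the edge model changes is the scope of any single failure, which is what drives the risk of losing everything at once.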

In summary

Integrators and end users creating advanced analytics systems and smart solutions cannot allow bandwidth and data transfer issues to jeopardise the functionality and resilience of the system. Equally, if the system is to meet the expectations of businesses and organisations, it should be able to consistently deliver the additional smart benefits which have been sold.

An edge-based approach saves money, reduces network load, offers a more stable solution and reduces the potential impact of system failures. In short, edge-based systems increasingly represent best practice!